There are certain terms that people sidestep in a statistics class. And by “sidestep” I mean that once you hear the terminology you go ‘uh, right’ and rush past it. Or you recite the definition as if saying it five times and clicking your heels will get you out of this place. I am sure you have heard of Type I and Type II error. (Honestly, even as I type the terminology I want to forget I said it.) Error is not something instinctual. You either know what causes it or you do not have a clue. Personally, I managed to dodge it for quite a few statistics classes, but anyway… here it goes…

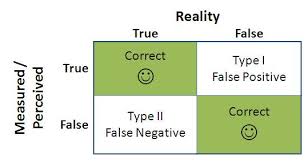

A Type I error is when you reject a null hypothesis that is actually true. So you run a hypothesis test and you cheer when it comes up as p<0.05, when actually the null is true. An example of a Type I error would be a diagnostic test that gives a positive result when the patient does not have the disease (false positive). A Type II error is when you fail to reject a null hypothesis that is actually false. An example of a Type II error is a negative test result for a patient who does have the disease (false negative).
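If it helps to see the two definitions side by side, here is a minimal sketch in Python. The function name `classify_decision` and its two arguments are my own invention for illustration, and in real life you never actually get to know whether the null is true, which is the whole problem.

```python
def classify_decision(null_is_true: bool, rejected_null: bool) -> str:
    """Label the outcome of one hypothesis test (hypothetical helper)."""
    if null_is_true and rejected_null:
        # Rejected a true null: the healthy patient who tests positive.
        return "Type I error (false positive)"
    if not null_is_true and not rejected_null:
        # Failed to reject a false null: the sick patient who tests negative.
        return "Type II error (false negative)"
    # Either kept a true null or rejected a false one.
    return "correct decision"


# Walk through all four combinations of truth and decision.
for null_is_true in (True, False):
    for rejected_null in (True, False):
        print(null_is_true, rejected_null, "->",
              classify_decision(null_is_true, rejected_null))
```

Two of the four combinations are errors and two are correct decisions, which is why the usual picture of this is a 2x2 table.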

Now, Type I error is covered by the alpha level (α) of a hypothesis test. In other words, if your alpha level is 0.05, you have a 5% chance of rejecting the null when it is true. So any hypothesis test accounts for Type I error. Type II error, however, is accounted for when you calculate the power of the test. The probability of a Type II error is called β. Power is equal to 1-β, which is the probability that you correctly reject the null hypothesis when it is actually false. So power tells the researcher how likely the test is to detect an effect that is really there.
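To make α and power a little less abstract, here is a rough sketch in Python using only the standard library. Everything in it is an assumption on my part for illustration: a one-sided z-test with a known standard deviation, the function names `z_test_power` and `simulated_rejection_rate`, and the sample size and effect size in the example. It is one simple setup, not the way power is calculated for every test.

```python
import random
from math import sqrt
from statistics import NormalDist, fmean

STD_NORMAL = NormalDist()  # standard normal: mean 0, sd 1


def z_test_power(effect, sigma, n, alpha=0.05):
    """Power (1 - beta) of a one-sided z-test of H0: mu = mu0 vs H1: mu > mu0.

    `effect` is the true mean minus mu0. With effect = 0 the null is true,
    and the rejection probability is just alpha.
    """
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)   # cutoff for rejecting the null
    shift = effect * sqrt(n) / sigma         # how far the truth moves the z statistic
    return 1 - STD_NORMAL.cdf(z_crit - shift)


def simulated_rejection_rate(true_mean, mu0=0.0, sigma=1.0, n=25,
                             alpha=0.05, trials=20_000, seed=1):
    """Run the same z-test many times on simulated data and count rejections."""
    rng = random.Random(seed)
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)
    rejections = 0
    for _ in range(trials):
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        z = (fmean(sample) - mu0) / (sigma / sqrt(n))
        if z > z_crit:
            rejections += 1
    return rejections / trials


if __name__ == "__main__":
    # Null is true (no effect): every rejection here is a Type I error.
    print("Type I error rate (simulated):", simulated_rejection_rate(0.0))
    # Null is false (true mean is 0.5): rejections are correct decisions.
    power = z_test_power(effect=0.5, sigma=1.0, n=25)
    print("Power (formula):  ", round(power, 3))
    print("Power (simulated):", simulated_rejection_rate(0.5))
    print("Beta, the Type II error rate:", round(1 - power, 3))
```

When the null is true, the simulated rejection rate should land close to 0.05, which is α. With a true mean of 0.5, a standard deviation of 1, and 25 observations, the power comes out to about 0.80, so β is about 0.20.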

Real Talk: In a statistics 101 course you are expected to do calculations. You won’t be expected to calculate β. However, as a researcher you will be expected to know the power of your test. People want to know how likely your test was to catch a real effect. So it helps to be aware of the different types of error. These are just two types; there are others.

If you enjoyed this blog, there are more to follow. -Amy
